Once you get the initial configurations loaded, you’re ready to begin the lab. This is when the “fun” begins. Those of us who are used to starting labs with bare-bones configurations and hunting for a few misconfigurations will be in for a bit of a shock. This is not how this troubleshooting will go. You’ll be looking at a fully configured network…which you did not build. It was at this point that I should have realized that this would not be easy and that the 2-hour time limit, which initially sounded like all the time in the world, would be an issue.

When I say you’re looking at a fully configured network, I mean that things like QoS, Multicast, and IP Services are already in place. You can start to see how difficult Cisco can make these labs. Throw in a number of devices that you cannot access and INE’s recommendation that you only use show and debug commands, and you’re looking at a bad day on the CLI.

The lab document starts with a “Baseline” section. This gives you a list of the devices under your control as well as details about how the network has been configured. It is broken down into the familiar lab sections. For instance, Bridging and Switching might tell you which devices are STP roots, which VLANs are present, which flavor of STP is running, whether and how VTP is set up, etc. The IGP section describes the routing protocols, any route filtering, redistribution (yes, there is plenty of that), etc.

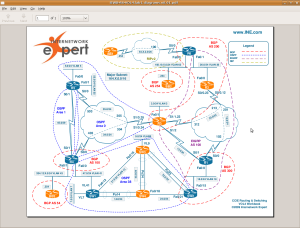

I read through the baseline and then started making network maps. The solution guide has some nice guidance on which maps you’ll want to build and how long that should take:

> We recommend making your own diagrams, including the following information:
>
> - IP addressing + IGPs.
> - Layer 2 topology.
> - BGP diagram.
> - IPv6 topology.
> - Multicast and Redistribution diagram.
>
> Overall, don’t spend too much time building the baseline – the goal is to spend around 20 minutes. By the end of the baseline analysis phase, you should have a clear understanding of the protocols and applications deployed in your network.

It took me a LOT longer than 20 minutes to get my head around what was going on in the network. It’s much harder to get quickly up to speed on a complex CCIE network when you haven’t built it from scratch. 😦

After getting a basic idea of what was going on, it was time to start looking at the tickets. There are ten tickets, each worth between 2 and 4 points, for a total of 30 points, so each ticket averages 3 points. As in the “classic lab”, you’ll need to fix each issue completely; no partial credit is awarded. Some tickets also can’t be resolved until you’ve fixed earlier ones. For example, ticket 10 in lab 1 has the following requirement:

> Ticket 10: Multicast
>
> Note: Prior to starting with this ticket make sure you resolved Tickets 4 and 5

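Since you need 80% to pass the troubleshooting portion of the lab, you’ll need at least 24 of the 30 points. That leaves just 6 points of slack, so you can really only miss about 2 tickets, depending on point values: two 3-point tickets, a 4-pointer plus a 2-pointer, or at most three 2-pointers.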

Logging is turned off on the devices. I strongly suggest enabling buffered logging on all devices (and removing that configuration before you finish the lab). A number of log messages point to issues you might otherwise miss if you aren’t on the device when the message is generated. With a log buffer, you can issue “show logging” and see what’s been going on.
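A minimal sketch of that logging setup in standard IOS global configuration. The buffer size and severity are my own picks, not something INE specifies, and whether `logging on` is needed depends on how the baseline disabled logging:

```
! Buffer log messages in RAM
! (16 KB buffer and "debugging" severity are arbitrary choices)
configure terminal
 logging buffered 16384 debugging
 ! Only needed if the baseline disabled logging with "no logging on"
 logging on
end
!
! Later, from exec mode: review what was logged
show logging
!
! Before finishing the lab, undo whatever you changed, for example:
configure terminal
 no logging buffered
end
```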

Another suggestion: work on the tickets that seem easy first. Then work on any tickets that are requirements for other tickets. Finally, work on the tougher tickets last.

I used some of my basic, initial troubleshooting habits to find a couple of issues. When I build a lab, I do basic verification after each section. For instance, once all Layer 2 configuration is complete, I verify that I can ping across each link. After each IGP configuration, I verify that the proper routes are being advertised and received, and I ping at least a subset of those routes. I suggest putting together a “toolkit” of common commands to run on each device when you approach the troubleshooting section, such as ‘show ip int br | e ass’, ‘show ip protocols’, ‘show ip route [protocol]’, etc.
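One possible toolkit, as plain IOS exec commands. This selection is mine rather than INE’s, so trim or extend it to match the protocols listed in your baseline:

```
! Interfaces that have an address, and their up/down state
show ip interface brief | exclude unassigned
! Running routing protocols, redistribution, administrative distance
show ip protocols
! Routes learned from one protocol (substitute eigrp, rip, bgp, ...)
show ip route ospf
! IGP adjacencies and BGP peerings
show ip ospf neighbor
show ip eigrp neighbors
show ip bgp summary
! Layer 2: trunks and the root bridge for each VLAN
show interfaces trunk
show spanning-tree root
! IPv6 and multicast
show ipv6 interface brief
show ip pim neighbor
```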

Let’s look at one of the (easy) tickets from Lab 1:

> Ticket 4: Connectivity Issue
>
> - Another ticket from VLAN7 users. They cannot reach any resource on VLAN 5 – all IP Phones have unregistered, and nothing else works.
> - However, they are still able to reach the local resources.
> - Using the baseline description as your reference, resolve this issue in optimal manner.
>
> 3 Points

You will notice this issue if you do a Layer 2 check by pinging across directly connected links: you cannot ping from r4 to sw1 on VLAN 41. Looking at sw1, I could see that the SVI for VLAN 41 was down. Sounds like an easy fix: make sure that VLAN 41 has been added to the VLAN database.
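On sw1, that first round of checks can be sketched with a few standard show commands (VLAN and interface numbers come from this ticket; the choice of commands is mine):

```
! Is VLAN 41 defined and active in the VLAN database?
show vlan id 41
! Is the SVI up/up, and if not, which half is down?
show interfaces vlan 41
! Which ports carry VLAN 41, and in which STP state?
show spanning-tree vlan 41
```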

Actually, VLAN 41 was in the VLAN database. The IP addressing was correct. All of the other SVIs were up and working. WTF?

Here is the configuration for the SVI:

```
interface Vlan41
 ip address 164.16.47.7 255.255.255.0
 ip access-group REMOTE_DESKTOP in
 ip pim sparse-dense-mode
 ntp broadcast client
 ntp broadcast
```

Hmmm… I’ll bet INE has configured a dastardly access list. Let’s see the configuration for that sucker:

```
ip access-list extended REMOTE_DESKTOP
 dynamic RDP timeout 10 permit tcp any host 164.16.7.100 eq 3389
 deny tcp any host 164.16.7.100 eq 3389
 permit ip any any
```

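Oh fucking joy. A dynamic access list. But I don’t see how this would break connectivity, let alone keep my SVI down: the only deny is for RDP (TCP 3389) to 164.16.7.100, “permit ip any any” lets everything else through, and an inbound ACL filters traffic rather than changing an interface’s up/down state. Just to be sure, I removed the ACL. The SVI remained down, but I did catch a break when I issued ‘no shut’ after re-adding the ACL: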

```
*Mar 1 02:19:56.300: %SPANTREE-2-ROOTGUARD_BLOCK: Root guard blocking port FastEthernet0/14 on VLAN0041.
```

Interesting. According to our baseline guidelines, sw1 should be the root bridge for all active VLANs. The initial configuration reflects this:

```
spanning-tree vlan 1-4094 priority 8192
```

Fa0/14 is a trunk link to sw2:

```
interface FastEthernet0/14
 switchport trunk encapsulation dot1q
 switchport mode trunk
 spanning-tree guard root
```
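With `spanning-tree guard root` configured, a port that receives a superior BPDU is placed in the root-inconsistent state (effectively blocking) instead of becoming a root port, and it recovers on its own once those superior BPDUs stop. On sw1, the ports root guard is currently holding can be checked with standard show commands (my choice of checks, not INE’s):

```
! Ports held in an inconsistent state (root guard, loop guard, etc.)
show spanning-tree inconsistentports
! Per-port STP detail for the trunk toward sw2
show spanning-tree interface fastEthernet 0/14 detail
```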

So it looks like someone is sending a superior BPDU for VLAN 41 toward sw1. Wanna bet that it’s either sw3 or sw4, the two switches we can’t access? 🙂

```
Rack16SW2#sh spanning-tree root

                                      Root      Hello Max Fwd
Vlan             Root ID              Cost      Time  Age Dly  Root Port
---------------- -------------------- --------- ----- --- ---  ------------
VLAN0001          8193 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0003          8195 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0005          8197 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0007          8199 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0009          8201 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0013          8205 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0018          8210 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0026          8218 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0041          4137 0012.4337.1880        19     2  20  15  Fa0/17   <- NOTE!
VLAN0043          8235 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0055          8247 0017.0e3f.3900        19     2  20  15  Fa0/14
VLAN0062          8254 0017.0e3f.3900        19     2  20  15  Fa0/14
```

```
Rack16SW2#sh spanning-tree vlan 41

VLAN0041
  Spanning tree enabled protocol ieee
  Root ID    Priority    4137          <- less than 8233 on sw1
             Address     0012.4337.1880
             Cost        19
             Port        19 (FastEthernet0/17)
             Hello Time   2 sec  Max Age 20 sec  Forward Delay 15 sec

  Bridge ID  Priority    32809  (priority 32768 sys-id-ext 41)
             Address     001f.9e4a.fa00
             Hello Time   2 sec  Max Age 20 sec  Forward Delay 15 sec
             Aging Time 300

Interface        Role Sts Cost      Prio.Nbr Type
---------------- ---- --- --------- -------- --------------------------------
Fa0/4            Desg FWD 19        128.6    P2p
Fa0/14           Desg FWD 19        128.16   P2p
Fa0/17           Root FWD 19        128.19   P2p   <- goes to sw3, not sw1!
```
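The priorities in this output include the extended system ID: with per-VLAN STP, each switch advertises its configured priority plus the VLAN number, which is exactly what sw2 shows for itself (“priority 32768 sys-id-ext 41”). Worked through for VLAN 41 (the root’s configured value of 4096 is inferred from 4137, since we can’t see that switch’s configuration):

$$
\begin{aligned}
\text{sw2:}\quad & 32768 + 41 = 32809\\
\text{sw1 (baseline):}\quad & 8192 + 41 = 8233\\
\text{root via Fa0/17:}\quad & 4096 + 41 = 4137\\
\text{sw1 (priority 0):}\quad & 0 + 41 = 41
\end{aligned}
$$

The lowest bridge ID wins (priority first, MAC address as the tie-breaker), so 4137 beats sw1’s 8233, while a priority of 0 on sw1 would beat 4137.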

I think I’ve found my problem: sw3 is advertising a lower (better) priority for VLAN 41 than sw1. I went ahead and set the STP priority to 0 for ALL VLANs on sw1. INE chose to change only VLAN 41.

Me:

```
Rack16SW1(config)#spanning-tree vlan 1-4094 priority 0
```

INE:

```
spanning-tree vlan 41 priority 0
```

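Either way, sw1 once again had the best bridge ID for VLAN 41, so the superior BPDUs stopped arriving on Fa0/14 and root guard unblocked it. That put a forwarding port back into VLAN 41, which brought up the SVI (an SVI only comes up when at least one port in its VLAN is up and forwarding)…which brought up IP connectivity. 🙂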

```
*Mar 1 02:33:29.608: %SPANTREE-2-ROOTGUARD_UNBLOCK: Root guard unblocking port FastEthernet0/14 on VLAN0041.
```
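Beyond the log message, a quick confirmation on sw1 might look like this. These are my own checks, not steps from INE’s solution guide:

```
! The Root ID section should now say "This bridge is the root"
show spanning-tree vlan 41
! The VLAN 41 SVI should be up/up
show ip interface brief | include Vlan41
```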

That should give you an idea of one of the easier tickets. Even though this was a fairly easy issue, it threw me off my game because 99.99% of the time when I see an SVI down, it’s because the VLAN is not in the VLAN database.

In the final part of the review I’ll discuss the solution guide as well as my overall impressions.